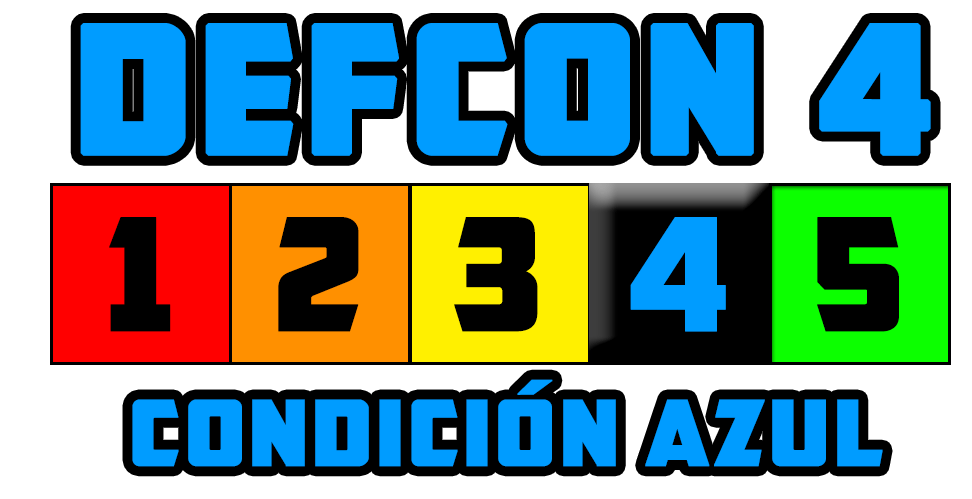

Machine learning (ML) security is a new discipline focused on the security of machine learning systems and the data they are built upon. It exists at the intersection of the information security and data science domains. While the state of the art moves forward, there is no clear onboarding and learning path for securing and testing machine learning systems. How, then, should interested practitioners begin developing machine learning security skills? You could read related articles on arXiv, but how about practical steps?

Competitions offer one promising opportunity. NVIDIA recently helped run an innovative ML security competition at the DEF CON 30 hacking and security conference. Hosted by AI Village, the competition drew more than 3,000 participants. It aimed to introduce attendees to each other and to the field of ML security. The competition proved to be a valuable opportunity for participants to develop and improve their machine learning security skills.

To proactively test and assess the security of NVIDIA machine learning offerings, the NVIDIA AI Red Team has been expanding. While this team consists of experienced security and data professionals, they recognized a need to develop ML security talent across the industry. With more exposure and education, data and security practitioners are likely to improve the security of their deployed machine learning systems.

AI Village is a community of data scientists and hackers working to educate on the topic of artificial intelligence (AI) in security and privacy. The community holds events at DEF CON each year. The NVIDIA AI Red Team and AI Village joined together at DEF CON 30 to engage the information security community with a machine learning security competition. The topic was potentially new to many attendees.

Members of the AI Village created challenges designed to teach and test elements of ML security knowledge. In addition to NVIDIA, these members represented AWS Security, Orang Labs, and NetSec Explained.

AI Village Capture the Flag Competition

Capture the Flag (CTF) competitions include multiple challenges. Competitors play through the challenges and collect flags for those that are completed successfully. These flags are assigned various point values based on the level of challenge. Competitors win by collecting the most points.

With this familiar format in mind, the AI Village and NVIDIA AI Red Team built The AI Village CTF DEFCON. Organizers partnered with Kaggle to use a platform familiar to the machine learning community. Similar to information security CTFs, Kaggle competitions provide a format for ML researchers to compete on discrete problems. Partnering with Kaggle provided the competition with a flexible and scalable platform that paired compute and data hosting with documentation and scoring.

Competitors reported onboarding and moving through the challenges with ease, with minimal additional infrastructure required from the AI Village. Although the challenge servers are no longer active, you can view the challenge descriptions.

Furthermore, Kaggle has a large audience of skilled data scientists and machine learning engineers who were excited to explore the security domain. Kaggle also generously offered ongoing support and $25,000 in prizes. We could not have asked for a better partner for this event.

Over the month-long competition, more than 3,000 competitors hacked their way through 22 challenges. This far exceeded expectations and included participants from over 70 countries, from first-time Kagglers to Grandmasters.

Competitors used publicly available tools and innovative technique applications such as open source research, masking, and dimensionality reduction. The event succeeded in bringing the traditional information security and machine learning communities together to tackle a range of challenges from this new domain of ML security.
